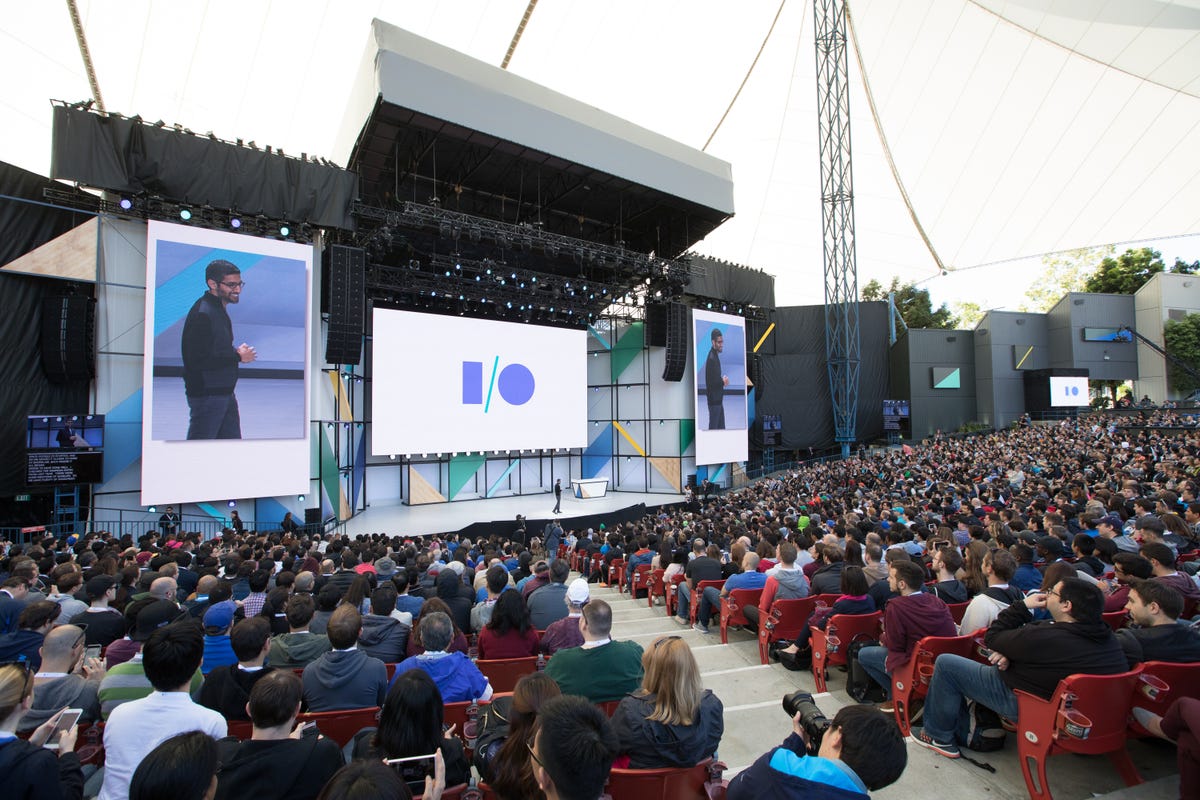

Google I/O 2017

On May 17, fans tuned in to watch the keynote address at Google I/O, the company's annual developer conference. To read about all of today's Google announcements, check out CNET's coverage of Google I/O 2017.

Android O will monitor your phone's "Vitals," such as speed, performance and battery life. You can read all about changes to Android O here.

The five most important takeaways.

In addition, Google is releasing Android Go, a slimmed-down, less-demanding version of the operating system, to power devices in emerging markets.

Twice-as-fast boot time...

Google Assistant is now available on iOS, and Google released a developer's kit to bring it to a whole bunch of other devices.

The Google Home, its smart speaker for Google Assistant, gets tons of new features.

Visual Responses will send information to a relevant device for display, such as directions to your phone or a calendar to your Chromecast for display on your TV.

The Google Home will also sport Spotify integration (as well as SoundCloud and Deezer) and Bluetooth support. It can launch HBO Now as well.

The Google Home can speak a lot of different tech dialects.

It's also a way to let you interact with streaming services without having to talk to your TV.

The Google Home will now have "proactive assistance," otherwise known as push notifications, hands-free calling (outgoing only, to start), Spotify integration (as well as SoundCloud and Deezer) and Bluetooth support. It can launch HBO Now as well.

By combining GPS with the intelligent learning of Google Lens, you can get quick information, such as restaurant reviews, just by pointing your phone's camera at the places you see nearby on the street.

Wanna know what flower you're looking at without fumbling with some sort of identification app? Just point your camera and ask. Google will tell you.

Here the presenter demonstrated securing concert tickets and marking his calendar, all through the voice assistant.

Google Photos got some major attention too. With machine learning and facial recognition working to classify all the photos you take, Google aims to make it more likely that you'll share your photos with the people who appear in them.

You can even share a photo feed with your spouse or family, synchronizing all, some, or just new photos taken on your phone with certain people in them. This streamlines sharing, but could be irritating if you don't particularly want to see every food or cat photo your spouse takes.

Google is launching a photo book service, too. Tell Google what you want the subject of your book to be. In this case, the presenter used a Mother's Day book of photos of his wife and children as an example.

Google pulled up all the photos it recognized as containing the people in question, and then decided which ones would be best in the book. It even did a layout for him.

The company announced several new efforts. Google.ai is a spinoff division to encompass learning systems, research tools and applied AI to inform all of its work.

This new chip, delivering an impressive 180 teraflops of computing power, both runs and trains machine-learning models.

This slide visualizes how a machine-learning system is trained to recognize the difference between a cat and a dog in the photographs it encounters.

Google Lens is a new recognition engine that enables intelligent mixed reality -- performing text and object recognition and feeding the results into other apps to act upon, such as using the camera to read your router's serial number and automatically provide related links.

As you can imagine, the more photos are fed into Google, the better the AI gets over time at recognizing various subjects.

Same thing with speech recognition. The more we use Google Assistant, the better it gets at distinguishing our individual voices and deciphering our speech, even in noisy environments.

Google wants to push you to share more, adding Suggested Sharing to Google Photos to remind you to share and recommend who to share with, plus a feed of shared images.

Following its Daydream View phone-based headset from 2016, which has garnered new phone partners, Google announced an unnamed Daydream-compatible headset.

It doesn't require a phone or computer, but will be a standalone wireless VR headset with built-in positional tracking. We'll see devices later this year.

Google also mentioned its visual positioning service, which uses Project Tango technology to pinpoint your location indoors more precisely than GPS can.

Read all about the standalone headsets due from HTC and Lenovo here on CNET.

Google didn't say how much these headsets will cost, nor what they'll be called. But they will be available by the end of 2017.