Tesla explains how the Full Self-Driving sausage is made

During its AI Day stream, Tesla's engineers demonstrated exactly how the so-called Full Self-Driving system "sees" the world and makes driving decisions.

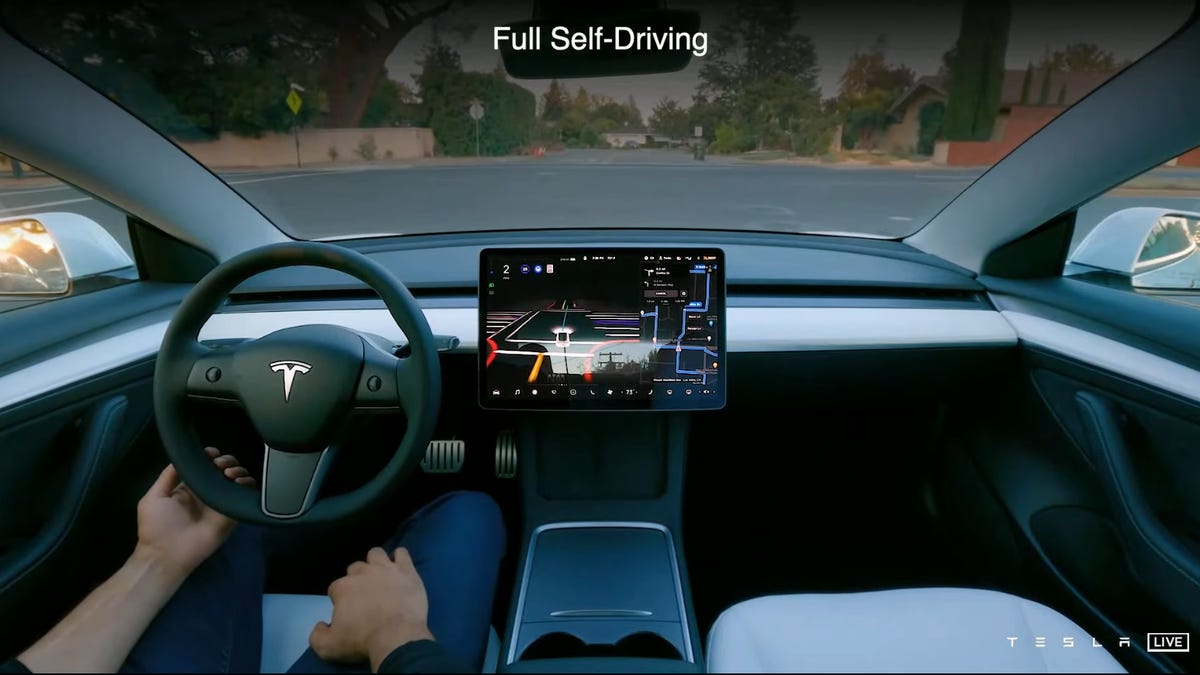

After a late start, Tesla's AI Day event kicked off Thursday evening with a video demonstration of the company's upcoming Full Self-Driving system following a navigation route on suburban roads. During the demo, a driver set a destination on the car's navigation system and double-clicked the stalk on the steering column. The vehicle then appeared to pull into traffic and negotiate intersections with stop signs and traffic lights on its own. Along the way, it avoided pedestrians and made both right and left turns. The driver, meanwhile, kept their fingertips lightly on the steering wheel as the wheel spun on its own.

Right now, Tesla's Full Self-Driving system is not actually fully self-driving, but CEO Elon Musk is bullish that the technology will not only advance but will eventually be better than the average driver.

"I'm confident that our hardware 3 Full Self-Driving computer 1 will be able to achieve full self-driving at a safety level much greater than a human," Musk said. "At least 200% or 300% better than a human. Then, obviously, there will be a hardware 4 FSD computer 2, which we'll probably introduce with Cybertruck -- so maybe in about a year or so. That will be about four times more capable, roughly."

The bulk of the AI Day presentation was dedicated to how Tesla's artificial intelligence engineers are working to improve the comfort and safety of the FSD system. It starts with a three-dimensional Vector Space that is created as the vehicle senses its environment through its eight cameras. These eight feeds are corrected and then fused into a single virtual environmental prediction model that gives the car's computers a bird's-eye view of the world it's navigating. Think less 360-degree camera system and more Tron-like 3D recreation of the local space.

Tesla's engineers demonstrated how the Vector Space model (lower right) results in more accurate object and environment recognition than their old, raster-based system (lower left).

Some of the processed Vector Space data is visible in Tesla's demonstration video, with road data, vehicles and pedestrians rendered in simple detail on the car's central screen.
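Tesla's system fuses its eight camera feeds with trained neural networks rather than hand-written geometry, and it did not publish code for this step. The basic idea of turning several camera views into one top-down map can still be sketched by hand. This minimal example assumes each camera reports an object's bearing and estimated range, treats every camera as if it were mounted at the center of the car, and drops each detection into a shared bird's-eye occupancy grid. The `CameraDetection` type, `fuse_to_bev` function and grid sizes are hypothetical illustrations, not Tesla's method.

```python
import math
from dataclasses import dataclass

@dataclass
class CameraDetection:
    camera_yaw_deg: float  # direction the camera faces, relative to the car's nose (hypothetical)
    bearing_deg: float     # angle of the object within that camera's view
    range_m: float         # estimated distance to the object

def to_vehicle_frame(det):
    """Convert one camera's detection into car-centered x (forward) and y (left), in meters.

    Assumes every camera sits at the car's center, which real hardware does not.
    """
    angle = math.radians(det.camera_yaw_deg + det.bearing_deg)
    return det.range_m * math.cos(angle), det.range_m * math.sin(angle)

def fuse_to_bev(detections, size_m=40.0, cell_m=1.0):
    """Mark every detection from every camera in one shared top-down grid centered on the car."""
    n = int(size_m / cell_m)
    grid = [[0] * n for _ in range(n)]
    for det in detections:
        x, y = to_vehicle_frame(det)
        row = int((size_m / 2 - x) // cell_m)  # farther forward is closer to row 0
        col = int((size_m / 2 - y) // cell_m)  # farther left is closer to column 0
        if 0 <= row < n and 0 <= col < n:      # ignore objects outside the grid
            grid[row][col] = 1
    return grid

# A car 10 m ahead (front camera), one 8 m to the left (side camera), and one out of range.
grid = fuse_to_bev([CameraDetection(0, 0, 10), CameraDetection(90, 0, 8), CameraDetection(0, 0, 100)])
```

Because every camera writes into the same grid, an object seen by any camera lands in one consistent map, which is the property that makes a fused bird's-eye view easier to plan against than eight separate images.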

Tesla's engineers also explained the new methods of environmental recognition and detection they're using to boost the accuracy of the Vector Space map and the precision of navigation. For example, the AI can cache information in a short-term memory to retain the positions of vehicles waiting at an intersection, even while they're momentarily blocked by cross-traffic. The system can also remember and predict the position of a leading car on the highway even if the Tesla's view of it is temporarily blocked by snow or water spray, and even without the aid of radar distance data.
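Tesla's short-term memory lives inside its neural networks. A classical stand-in for the same behavior is a tracker that keeps an object alive for a while after it disappears from view, coasting it forward at its last known velocity. This sketch assumes constant-velocity motion and a hypothetical `max_unseen_frames` cutoff; `OcclusionMemory` and `Track` are illustrative names, not Tesla's.

```python
from dataclasses import dataclass

@dataclass
class Track:
    x: float
    y: float
    vx: float
    vy: float
    frames_unseen: int = 0

class OcclusionMemory:
    """Keeps tracks alive after they vanish, predicting where they have moved."""

    def __init__(self, max_unseen_frames=30):
        self.max_unseen = max_unseen_frames  # hypothetical cutoff before forgetting an object
        self.tracks = {}

    def update(self, observations, dt):
        """observations maps a track id to (x, y, vx, vy) for objects seen this frame."""
        # Visible objects: overwrite the stored state and reset the unseen counter.
        for tid, (x, y, vx, vy) in observations.items():
            self.tracks[tid] = Track(x, y, vx, vy)
        # Hidden objects: coast forward at constant velocity, then forget after too long.
        for tid in list(self.tracks):
            if tid in observations:
                continue
            t = self.tracks[tid]
            t.x += t.vx * dt
            t.y += t.vy * dt
            t.frames_unseen += 1
            if t.frames_unseen > self.max_unseen:
                del self.tracks[tid]
        return self.tracks

# A lead car 30 m ahead pulling away at 2 m/s, then hidden by spray for five 0.1 s frames.
memory = OcclusionMemory(max_unseen_frames=10)
memory.update({"lead": (30.0, 0.0, 2.0, 0.0)}, dt=0.1)
for _ in range(5):
    tracks = memory.update({}, dt=0.1)
```

After the five hidden frames the lead car is still tracked, now predicted about 31 m ahead. The cutoff is the design trade-off: too short and cars waiting at an intersection vanish behind cross-traffic, too long and the map fills with stale guesses.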

The Vector Space data is used by what Tesla calls the Neural Net Planner, a collection of AI algorithms that handle the actual routing, trajectory and behavior of the car on the road when using FSD. The Planner handles every turn, every swerve around pedestrians and every lane change. In addition to running thousands of simulations per minute to decide its own best course of action, it also has to simulate and predict the behaviors of other cars, pedestrians and cyclists.

Tesla's Neural Net Planner is able to remember objects when they're blocked and predict the trajectory of vehicles and pedestrians.
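The planner's core loop of proposing many possible paths, predicting what everyone else will do and picking the best option can be illustrated with a toy version. This sketch is not Tesla's planner: it uses a hand-tuned cost function instead of anything learned, assumes other road users keep a constant velocity, and only considers a handful of lateral offsets and speeds. The `plan` function, its parameters and the cost weights are all hypothetical.

```python
import itertools
import math

def predict_agent(pos, vel, t):
    """Constant-velocity forecast of another road user's position at time t."""
    return pos[0] + vel[0] * t, pos[1] + vel[1] * t

def plan(agents, lane_offsets=(-2.5, 0.0, 2.5), speeds=(4.0, 8.0, 12.0),
         horizon_s=3.0, dt=0.5, safe_gap_m=2.0):
    """Score every (lateral offset, speed) candidate and return the cheapest one.

    agents is a list of ((x, y), (vx, vy)) tuples in the car's frame, in meters and m/s.
    """
    best, best_cost = None, math.inf
    steps = int(horizon_s / dt)
    for offset, speed in itertools.product(lane_offsets, speeds):
        cost = abs(offset) * 2.0   # comfort: prefer staying centered in the lane
        cost -= speed * 0.5        # progress: prefer moving faster
        for k in range(1, steps + 1):
            t = k * dt
            ego = (speed * t, offset)  # ego moves forward along x, holding its lateral offset
            for pos, vel in agents:
                ax, ay = predict_agent(pos, vel, t)
                if math.hypot(ego[0] - ax, ego[1] - ay) < safe_gap_m:
                    cost += 100.0  # heavy penalty for each predicted conflict
        if cost < best_cost:
            best, best_cost = (offset, speed), cost
    return best

# A pedestrian standing in the lane 12 m ahead: the cheapest plan swerves around them.
choice = plan([((12.0, 0.0), (0.0, 0.0))])
```

With an empty road the same function returns `(0.0, 12.0)`, staying centered at full speed. A real planner evaluates far more candidates and much richer predictions, but the trade-off between comfort, progress and predicted conflicts is the same shape.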

In one example, a Tesla running FSD encounters an oncoming vehicle as it squeezes down a narrow road with cars parked on both sides. The system decides whether to yield to the oncoming vehicle based on its speed, its path and the predicted intentions of its driver. And when that driver changes their mind and slows to let the Tesla pass, FSD reacts by switching from yielding to taking the right of way, rather smoothly avoiding the annoying "no you go, no I'll go" back-and-forth.
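A stripped-down version of that yield decision compares when each car would reach the narrow section and re-runs the comparison every planning cycle, so a change in the other driver's speed flips the answer. This sketch assumes simple time-of-arrival estimates instead of Tesla's learned predictions of driver intent; the `decide` function, `Oncoming` type, margins and the hysteresis term are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Oncoming:
    distance_to_gap_m: float  # how far the other car is from the narrow section
    speed_mps: float          # its current speed

def decide(ego_distance_to_gap_m, ego_speed_mps, other,
           previous="yield", margin_s=1.5, hysteresis_s=1.0):
    """Return 'proceed' or 'yield' for the narrow section; meant to be re-run every cycle."""
    # Time for each car to reach the narrow section; clamp speeds to avoid dividing by zero.
    ego_eta = ego_distance_to_gap_m / max(ego_speed_mps, 0.1)
    other_eta = other.distance_to_gap_m / max(other.speed_mps, 0.1)
    # Once already proceeding, accept a smaller lead before backing off, so small
    # speed changes don't cause a "no you go, no I'll go" flip-flop.
    needed_lead_s = margin_s - hysteresis_s if previous == "proceed" else margin_s
    return "proceed" if ego_eta + needed_lead_s < other_eta else "yield"

state = decide(20.0, 5.0, Oncoming(30.0, 10.0))        # -> "yield": the other car arrives first
state = decide(20.0, 5.0, Oncoming(30.0, 2.0), state)  # -> "proceed": the other driver slowed down
```

The hysteresis term is the piece aimed at the awkward back-and-forth: once the car commits, it takes a clearly larger change in the other driver's behavior to make it back off again.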

All of this processing happens in the vehicle, but the last piece of the puzzle, the one that helps the system run efficiently, is training and simulation. That happens off the road in Tesla's data centers, where the FSD software is trained and sensor data is analyzed to classify and label millions of objects. The labeled data and behavior models are then used to improve the in-car processing of individual vehicles.

The Dojo training tile is composed of tiled D1 compute modules. Multiple Dojo tiles combine to create the ExaPOD.

To crunch all of that simulation data at scale, Tesla is working on Project Dojo, its own in-house silicon designed specifically for AI training. The project starts with Tesla's D1 distributed computing chip. D1 chips are tiled to build Dojo training tiles, which are in turn tiled to create what Tesla calls the ExaPOD, a room-sized processing unit rated at 1.1 exaflops. For scale, that's as much processing horsepower as roughly 30,500 Nvidia RTX 3090 GPUs, but with the additional advantage of being custom-built for AI training.
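The scale figures can be checked with some quick arithmetic. This sketch takes as assumptions the per-chip and per-tile numbers Tesla gave on stage (about 362 teraflops per D1 chip, 25 chips per training tile, 120 tiles per ExaPOD) and a rounded 36 teraflops for one RTX 3090, which is roughly what the 30,500-GPU comparison implies.

```python
# Assumed figures: Tesla's stated D1/tile/ExaPOD numbers and a rounded RTX 3090 rating.
D1_TFLOPS = 362         # peak per D1 chip, at Tesla's low-precision AI formats
CHIPS_PER_TILE = 25     # D1 chips in one Dojo training tile
TILES_PER_EXAPOD = 120  # training tiles in one ExaPOD
RTX_3090_TFLOPS = 36    # rounded FP32 peak of a single RTX 3090

tile_tflops = D1_TFLOPS * CHIPS_PER_TILE          # about 9 petaflops per tile
exapod_tflops = tile_tflops * TILES_PER_EXAPOD    # about 1.1 exaflops per ExaPOD
gpu_equivalent = 1.1e6 / RTX_3090_TFLOPS          # headline 1.1 exaflops in 3090 units

print(f"training tile: {tile_tflops / 1e3:.2f} petaflops")
print(f"ExaPOD: {exapod_tflops / 1e6:.3f} exaflops")
print(f"RTX 3090 equivalents: {gpu_equivalent:,.0f}")
```

The tile count multiplies out to about 1.09 exaflops, which rounds to Tesla's 1.1 figure, and dividing 1.1 exaflops by 36 teraflops gives roughly 30,556 cards. Treat the GPU comparison as a rough yardstick: Tesla's figure is quoted at lower-precision AI number formats, while the 3090's rating is for standard 32-bit floating point.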

All of that data center computing power will hopefully help accelerate development of the FSD AI in Tesla cars and power artificial intelligence and robotics projects beyond the automobile, like the Tesla Bot Musk teased at the end of the presentation.