Researchers discover how to sabotage Tesla's Autopilot system

But don't expect this to become the next big thing in hacking.

There's a whole load of electronic doodaddery behind that bumper, and most of it is susceptible to sabotage.

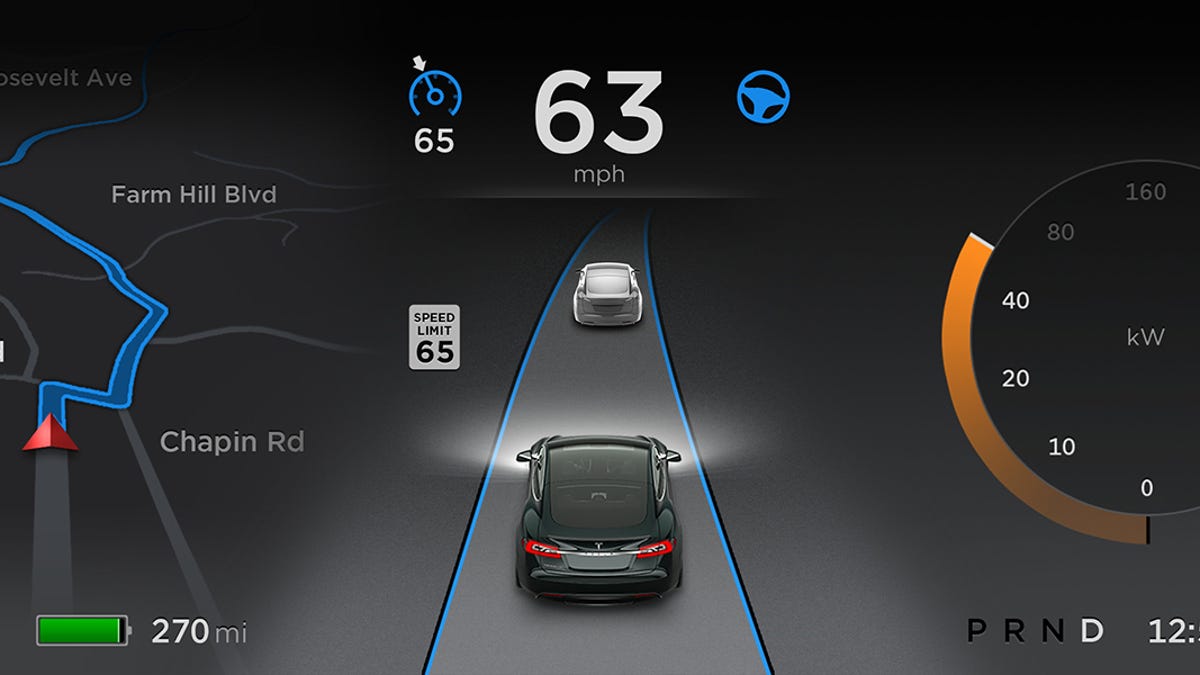

Sabotaging a car's electronics is quite the tricky feat, but in an era where cars are basically computers on wheels, it's not as tough as one might think. Hackers made headlines last year when they discovered how to screw with a Jeep Cherokee. Now, a group of researchers has figured out a way to intentionally sabotage Tesla's Autopilot system.

Researchers from the University of South Carolina, Zhejiang University and Qihoo 360 (a Chinese security firm) have figured out how to purposefully confuse Autopilot's sensors, Wired reports. The group, which used off-the-shelf products to carry out the hack, will report its findings at the Defcon hacker conference.

The group was able to convince Autopilot that an object existed when it did not, and also tricked the semi-autonomous driver-assistance system into believing that an object didn't exist when it did. In theory, that could lead Autopilot to drive incorrectly, potentially putting both passengers and the general public in danger.

The group used two pieces of radio equipment to convince the Tesla's radar sensor that a cart placed directly in front of the car was not there. One of those pieces, a signal generator from Keysight Technologies, costs about $90,000. The group also tricked the car's short-range parking sensors into malfunctioning using about $40 worth of equipment.

Wired points out that this was, thankfully, a rather difficult feat. Most of the technological tomfoolery was done on a stationary car. Some of the required equipment was expensive, and it didn't always work. But it brings up an important point: even though Autopilot is quite capable, there's still no substitute for an attentive human driver, ready to take control at a moment's notice.

I should also point out that this isn't a hack in the sense of breaking into the vehicle: no access to the car, and no trickery inside it, was required. The researchers simply interfered with the waves the car's sensors emit, be they physical sound waves (the ultrasonic parking sensors) or electromagnetic radio waves (radar). So, in theory, this could be applied to any vehicle emitting these waves, not just a Tesla Model S.

Unless you're taking mushrooms, hackers won't be able to trick your eyes into seeing things that aren't there. And even then, it won't be hackers doing that, it'll be the mushrooms.

Update, 1:52 p.m. Eastern: Added clarification on the nature of the sabotage, and how it relates to not just Tesla's own vehicles.