Ever app trained facial recognition tech on users' photos, report says

The company, however, says images collected via the photo storage and backup app aren't used to train its enterprise facial recognition products.

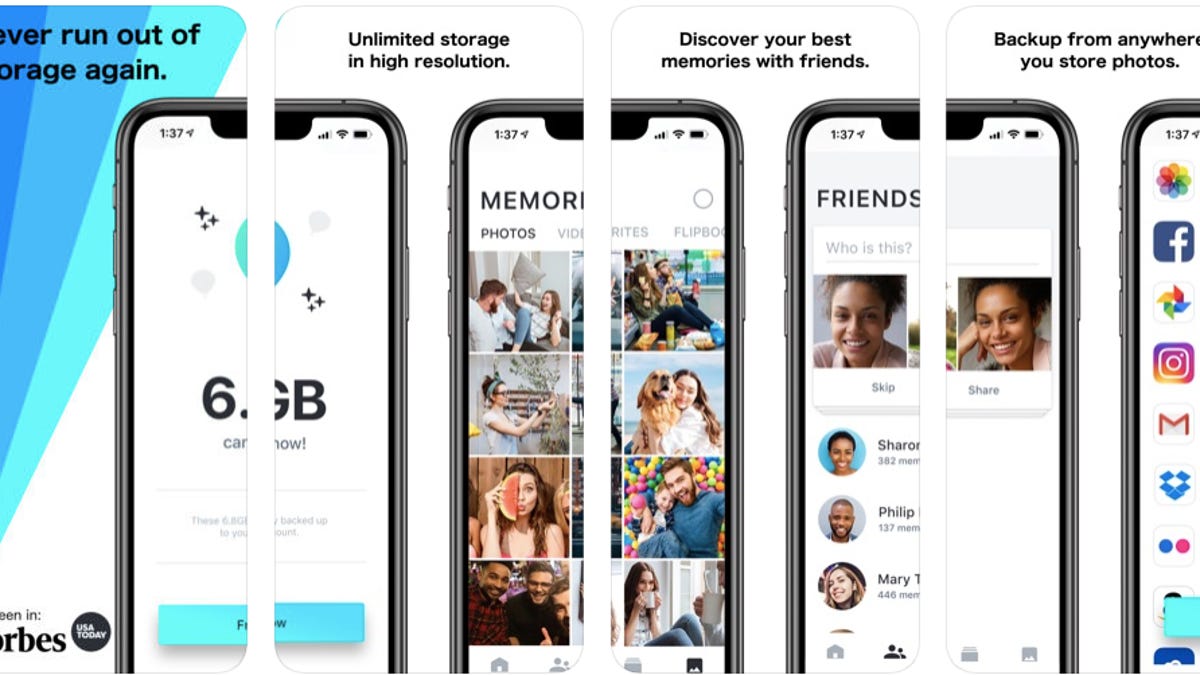

The Ever app for iOS. The company behind it wants to sell facial recognition products to private companies and law enforcement, reports NBC News.

Ever, a photo storage and backup app, reportedly used millions of images uploaded to the service to train a commercial facial recognition system that it offers to law enforcement and private companies. The problem, according to NBC News, is that Ever didn't clearly disclose this to users of the app.

Photos uploaded to the Ever app are used to train an algorithm that powers the company's facial recognition products, sold under Ever AI, NBC News reported on Thursday. This isn't obvious on Ever's website, according to NBC News, but the app reportedly updated its privacy policy in April with more information on how the company uses customers' photos. NBC News said it spoke with seven Ever users, and most didn't know their photos were being used to build facial recognition tech.

In an email, Ever AI CEO Doug Aley said the NBC News report is inaccurate and that the company doesn't use images collected via the Ever app to train facial recognition on the Ever AI side.

NBC News didn't immediately respond to a request for comment.

Ever AI has contracts with private companies, including SoftBank Robotics, but it hasn't signed up any "law enforcement, military or national security agencies," according to NBC News.

Aley reportedly told NBC News that Ever AI doesn't share photos or identifying information about app users with its facial recognition customers.

The Ever app is available on Android and iOS, as well as Mac and Windows desktops.

Developers of facial recognition systems train them by feeding software millions of photos so the AI learns to recognize people -- the more images, the better. But some facial recognition creators don't have enough images to train their software and have been grabbing them without people's consent. In March, NBC News found that IBM released nearly a million photos from Flickr to researchers without people's permission.

It's a privacy issue, and some people uploading their photos online may not want their images used to develop surveillance technology. Facial recognition is being used by police, at airports and in stores, as lawmakers raise concerns about how the technology is put into practice. San Francisco lawmakers have proposed legislation that could make SF the first US city to prohibit city agencies from using facial recognition.

Originally published May 9, 9:57 a.m. PT.

Updates, 11:21 a.m.: Adds details on facial recognition; 7:22 p.m.: Adds comment from Ever AI CEO Doug Aley.