Facebook adds a new tool to fight fake news: context

The social network has a fake-news problem -- and now a new tool to shoo away the hoaxes.

Facebook on Thursday introduced another tool to help fight fake news, adding to a long list of fixes that it's tried over the last year.

Mark Zuckerberg and the website he created have been at the center of the fake-news epidemic plaguing the internet. Within the last two weeks, the Facebook CEO has asked for forgiveness for the division his website has abetted and vowed to protect election integrity after finding 3,000 Russia-linked ads on the social network.

Over the last year, Facebook has created several tools, such as fact-check tags on articles and fake news warnings. None has been the cure Facebook sought.

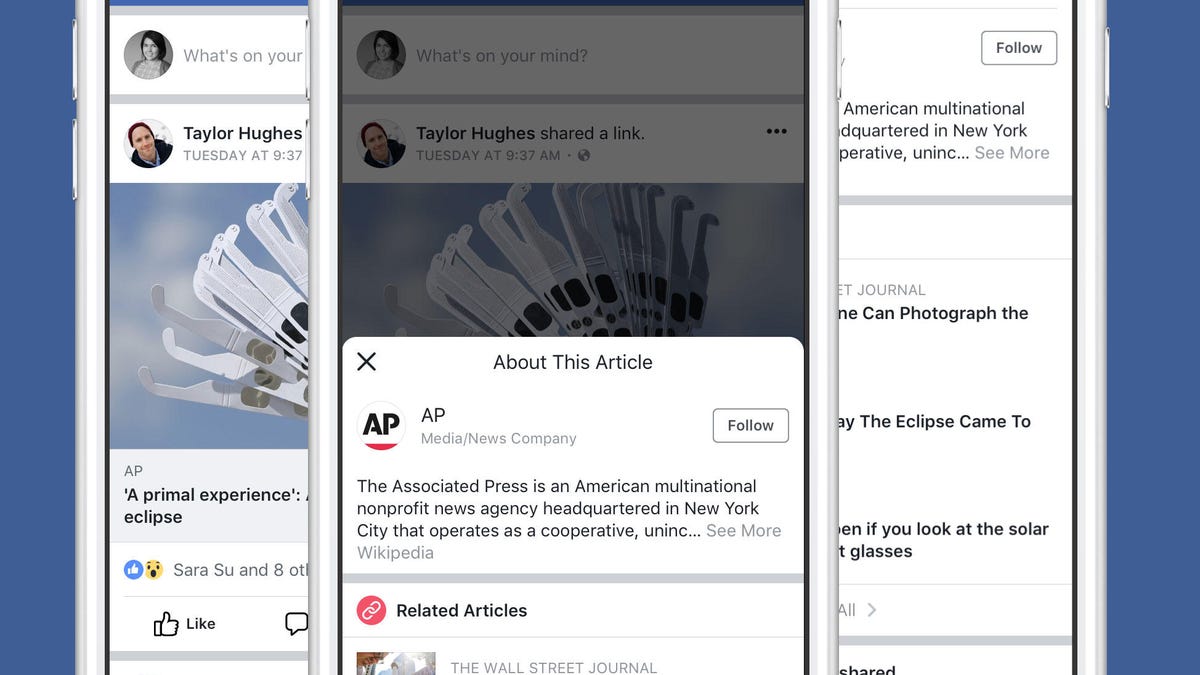

This time, the social network has created a feature that will show context on articles posted to your newsfeed. Next to posted links, you'll find a button that will launch a pop-up window showing details about the article and its publisher.

It pulls descriptions of the website the article is linked from, scraping details from the publisher's Wikipedia entry, as well as other sources, Facebook said. So if you shared an article from CNET on Facebook, you could get a pop-up saying "CNET (stylized as c|net) is an American media website that publishes reviews, news, articles, blogs, podcasts and videos on technology and consumer electronics globally," as it's described in its Wikipedia entry.

Of course, that doesn't mean that Wikipedia is a foolproof check against fake news. The crowd-sourced encyclopedia can be edited by anyone, including people trying to spread hoaxes. Wikipedia's co-founder Jimmy Wales even created a project to take on fake news called Wikitribune.

But Facebook isn't concerned about Wikipedia edits.

"Editing Wikipedia entries is an issue that is usually resolved quickly," a Facebook rep said in an email. "We count on Wikipedia to promptly resolve such situations and refer you to them for information about their policies and programs that address vandalism."

Ideally, context is a helpful tool to make sure you're considering the source of what you're sharing. If no context is available, Facebook will let readers know that too, which it believes could signal that they're reading a fake-news site.

"Helping people access this important contextual information can help them evaluate if articles are from a publisher they trust, and if the story itself is credible," Facebook said in a blog post published Thursday morning.

This update comes just three days after Facebook inadvertently contributed to the fake-news storm surrounding the Las Vegas mass shooting. For several hours, the top stories about the attack on the incident's Safety Check page came from websites like "TheAntiMedia.org" and "MyTodayTV.com," which asked for bitcoin donations.

If the context tool had been available, readers would've been better equipped to tell that neither of those was a trusted source.

As things were, people criticized Facebook for highlighting articles from questionable outlets:

Right now, FB's trending topic page for the Las Vegas shooting features two (2) posts from a Russian propaganda outlet. pic.twitter.com/jDR1V0zzPy

— Kevin Roose (@kevinroose) October 2, 2017

I find it a little disconcerting that the primary news source Facebook's algorithm is serving me about #LasVegasShooting is Russia Today pic.twitter.com/yLdWM1Rzf5

— dan michalski (@danmichalski) October 2, 2017

When asked about it, Facebook said it "deeply regret[s] the confusion this caused."

The context tool could help. Or it could blow up in Facebook's face, as previous tools have.
