Deepfake detection contest winner still guesses wrong a third of the time

It's still hard to distinguish fake videos of people from the genuine article, a troubling finding given upcoming elections.

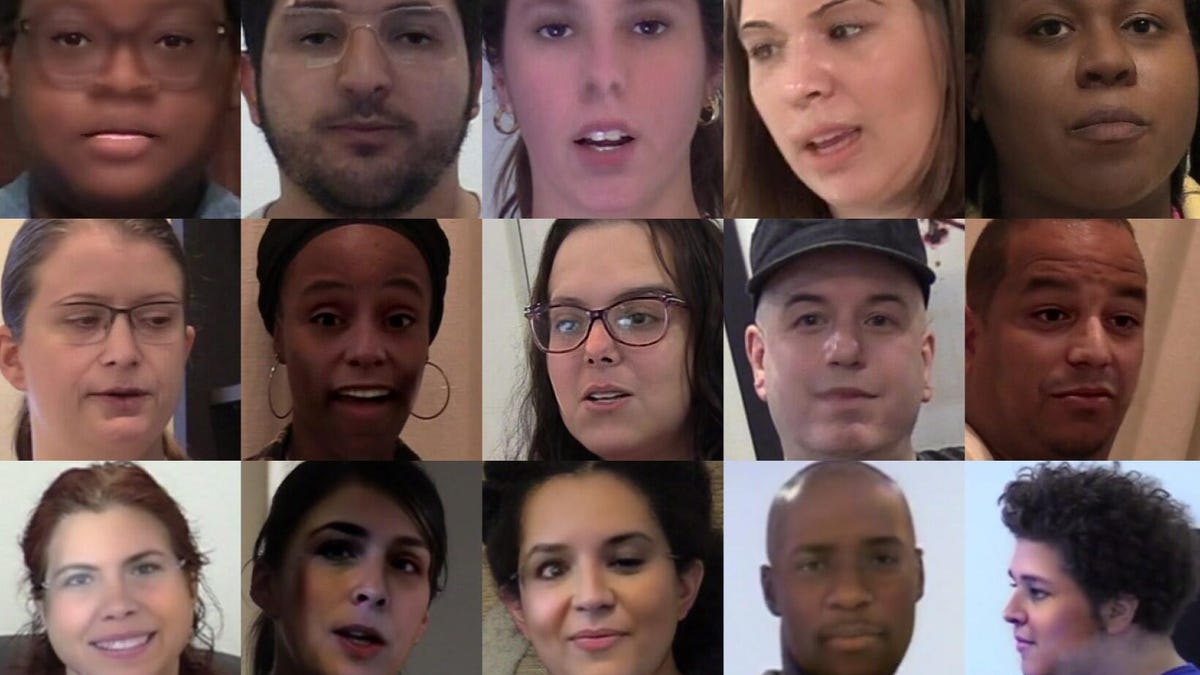

Some of the faces used in the 100,000 videos of the Deepfake Detection Challenge. The deepfake images become more convincing farther to the right.

When it comes to videos of people, it's hard to get a computer to tell a genuine video from a computer-generated fake, called a deepfake, a competition run by Facebook shows. Among the 2,114 participants, who submitted 35,000 deepfake-detecting computer models, the average success rate was 70% and the best was 83%.

But those scores were measured on a public data set of 100,000 videos that Facebook created for the competition, the Deepfake Detection Challenge (DFDC). On a separate collection of 100,000 previously unseen videos, with some extra techniques applied to make them harder to judge, the best score dropped to 65%.

AI technology has automated lots of tasks that used to be hard for computers, like transcribing human speech, screening out spam and applying van Gogh-style effects to your selfie. One downside, though, is that the same technology can be used to create deepfakes, for example by mapping one person's voice, style and features onto a video of another person. That could be a problem if a fabricated, embarrassing gaffe by a political candidate goes viral on social media before it's debunked.

Microsoft, Amazon, Facebook and universities including MIT, Oxford, Cornell and the University of California at Berkeley launched the competition in September 2019. Organizers paid 3,500 actors to record source video that was then modified in various ways to produce the 100,000 public videos on which contestants could train their artificial intelligence models. The actors were selected to represent a variety of genders, skin tones, ethnicities, ages and other characteristics, Facebook said.

The Deepfake Detection Challenge results are significant given concerns that fake videos could mislead people in the run-up to the US elections in November. Even if few deepfakes are convincing and most are caught, experts fear that the mere existence of deepfakes could lead voters to doubt the credibility of the videos they encounter and disrupt the election.

Facebook thinks cues beyond the video itself could help catch deepfakes.

"As the research community looks to build upon the results of the challenge, we should all think more broadly and consider solutions that go beyond analyzing images and videos," Facebook researchers said in a blog post. "Considering context, provenance, and other signals may be the way to improve deepfake detection models."

And research into deepfake detection isn't over. Competition organizers plan to release the raw video source material -- all 38 days' worth -- for new work in the area.

"This will help AI researchers develop new generation and detection methods to advance the state of the art in this field," Facebook said.