Iowa State research to give UAV jockeys a virtual view of battle space

Real-time virtual view of the battle space allows single operator to control multiple unmanned aerial vehicles simultaneously, say researchers.

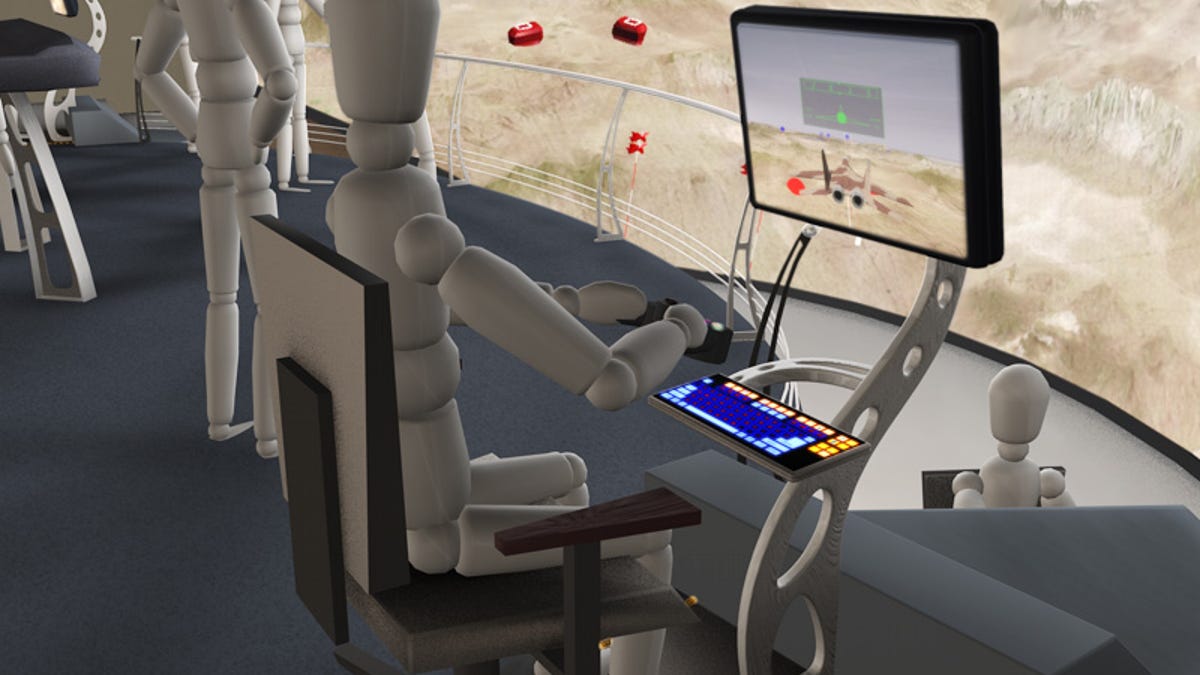

A team from Iowa State University is using virtual reality technology to develop "immersive" ground control stations that will give operators of military unmanned aerial vehicles (UAVs) an overall view of their planes and the battle space they are flying over.

The university's Virtual Reality Applications Center (VRAC) team is working under a $4.2 million contract as part of the U.S. Air Force Research Laboratory's effort to develop the "next generation control interface" for military UAVs. If successful, the real-time virtual view of the battle space will allow a single operator to control several UAVs simultaneously, all the while monitoring onboard instruments, cameras and weapons systems.

"We're also developing and measuring the effectiveness of new human interface techniques, which will enable operators to effectively control multiple, semi-autonomous aircraft. Already, up to 230 persons can be interfaced to participate in the system simultaneously," research leader Dr. James Oliver said in an interview with Space War.

The idea is to use novel eye-tracking and voice-control technology to provide a shared situational-awareness interface, which robo-plane crews can then monitor and interact with on large-screen displays.

This approach inverts the typical paradigm for conveying information to UAV jockeys, according to VRAC. Rather than augmenting the real-time camera picture with sensor-generated information, the new interface works more like a virtual operating theater, one that is constantly fed by a myriad of spatial and temporal information sources.