Nvidia Sees a Metaverse Populated With Lifelike Chatbot Avatars

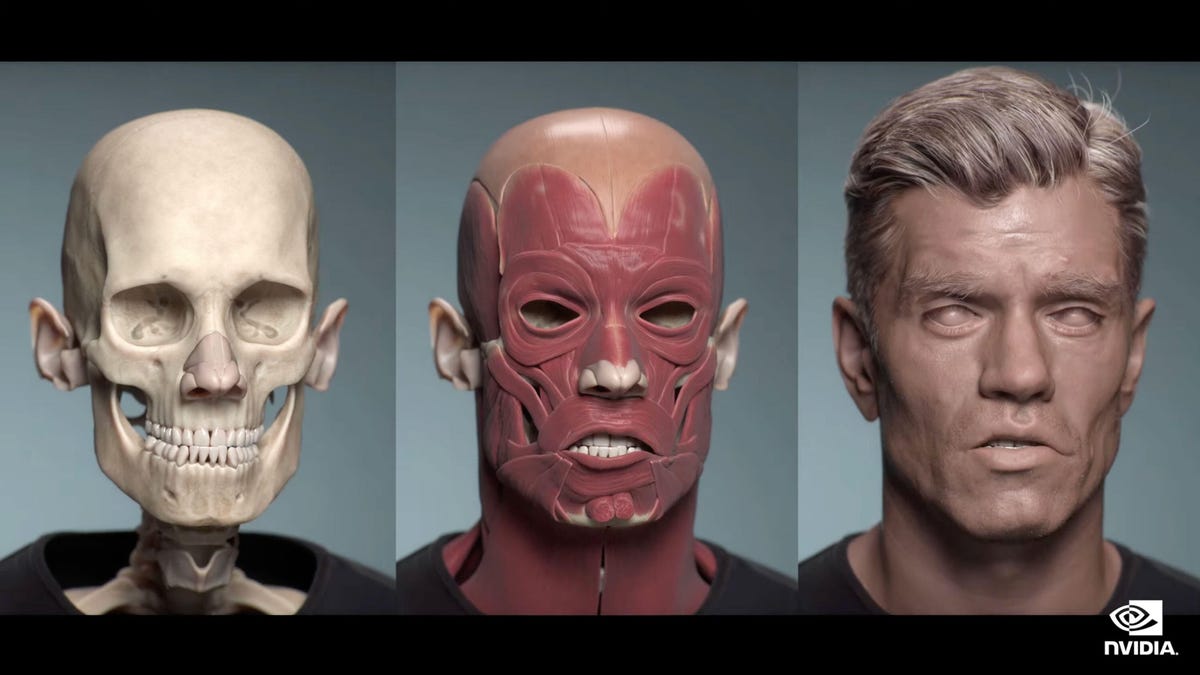

Nvidia builds its avatars from the skeleton and muscles up, to try to achieve realistic facial expressions that map to human speech and emotion.

What's happening

Nvidia announced technology to let metaverse developers create lifelike avatars that can give an animated human face to the computers that people will interact with online.

Why it matters

The metaverse needs new computing tools if it's to live up to its potential as a set of new 3D realms for working, learning, socializing and goofing off. Nvidia's technology could also eventually give humans, not just bots, a new look online.

You're probably used to interacting by voice with digital assistants like Alexa and Siri. Now, Nvidia thinks those voices should have digital faces.

On Tuesday, the chip giant unveiled its Avatar Cloud Engine, a tool for building 3D models of speaking humans that Nvidia hopes will be the way we interact with computers and, perhaps, with other people in the metaverse.

The tool draws on Nvidia's experience with 3D graphics and artificial intelligence technology, which has revolutionized how computers understand and communicate with natural language. Company Chief Executive Jensen Huang unveiled ACE in conjunction with the Siggraph computer graphics conference in Vancouver.

Advanced avatars such as those ACE could make possible are the next step in computer interaction. In the 3D digital realms that metaverse advocates like Meta and Nvidia hope we'll all inhabit, a human-looking face could help us manage our investments, tour an apartment building or learn how to knit.

"These robots are … necessary for us as we create virtual worlds that become indistinguishable from the real one," Rev Lebaredian, Nvidia's vice president of simulation technology, said in a media briefing. The avatars are "on a path to pass the Turing test," meaning that humans won't be able to tell if they're talking to a human or a bot, he said.

To get to that future, though, Nvidia will face plenty of challenges. Chief among them is the "uncanny valley," in which digital representations of humans are a hackle-raising blend of real and artificial. To human brains accustomed to the real thing, not-quite-real simulations can come across as creepy, not convincing.

Another question is whether the metaverse will live up to today's hype. Nvidia sees the metaverse as a visually rich 3D successor to the web, and Facebook believes in the metaverse so strongly that it renamed itself Meta. So far, however, only 23% of US adults are familiar with the metaverse, and the number is even lower elsewhere, according to analyst firm Forrester.

Still, avatar technology could be central to how we'll present ourselves online, not just how we chat with bots. Today's grid of faces in a Zoom videoconference could become photorealistic 3D versions of ourselves seated around a virtual conference table in the metaverse. When it's time for something less serious, computers scanning our faces could apply our expressions instantly to the online personas others see, such as a cartoon character.

Nvidia sees avatars not just as animated faces but also as full-fledged robots that perceive what's going on, draw on their own knowledge, and act accordingly. Those smarts will make them richly interactive agents in the metaverse, Huang said in his Siggraph speech.

"Avatars will populate virtual worlds to help us create and build things, to be the brand ambassador and customer service agent, help you find something on a website, take your order at a drive-through, or recommend a retirement or insurance plan," Huang said.


To improve its avatars, Nvidia developed AI technology called Audio2Face that matches the avatar's expression to the words it's saying. A related Audio2Emotion tool changes facial expression according to its assessment of the feelings in the words, with control to let developers dial up the emotion or present a calm avatar.

It's all built on a 3D framework including the human skeleton and muscles, said Simon Yuen, senior director of avatar technology. Nvidia lets people drag a photo into the avatar model, which then creates a 3D model of that person on the fly. Nvidia also offers controls to create hair strand by strand, complete with the ability to cut and style it.

Nvidia has a lot riding on the technology. If the metaverse catches on, it could mean a big new market for 3D graphics processing coming at a time when its other businesses are threatened.

On Monday, Nvidia warned of worse-than-expected quarterly profits as consumers' economic worries tanked sales of video game hardware. The AI chips that Nvidia sells to data center customers didn't fare as well as hoped, either. No wonder Nvidia wants to see us all chatting with avatars in the metaverse.

The metaverse is "the next evolution of the internet," Huang said. "The metaverse is the internet in 3D, a network of connected, persistent, virtual worlds. The metaverse will extend 2D web pages into 3D spaces and worlds. Hyperlinking will evolve into hyperjumping between 3D worlds," he predicted.

One sticky problem with that vision is that there isn't yet a standard for developers to create metaverse realms the way they create web pages with the HTML standard. To solve that problem, Nvidia is backing and extending a format called Universal Scene Description originally created at movie animation studio Pixar.

Nvidia is working hard to beef up USD so it can handle complex, changing 3D worlds, not just the series of preplanned scenes that make up frames in a Pixar movie. Among Nvidia's other allies are Apple, Adobe, Epic Games, Autodesk, BMW, Walt Disney Animation Studios, Industrial Light and Magic, and DreamWorks.

Analyst firm ABI Research likes USD, too, saying Nvidia's Omniverse product for powering 3D realms has helped prove its worth and that other contenders haven't emerged despite lots of metaverse development.

"In the absence of a preexisting alternative, ABI Research agrees with Nvidia's position to make USD a core metaverse standard -- both on its technical merits and to accelerate the momentum around the buildup to the metaverse," ABI said in a statement.