Nvidia shows self-driving supercomputer at CES, names it Xavier

At CES 2017, Nvidia took the wraps off a new computer designed to enable self-driving cars, and announced partnerships with Audi, Bosch, ZF, Here and Zenrin.

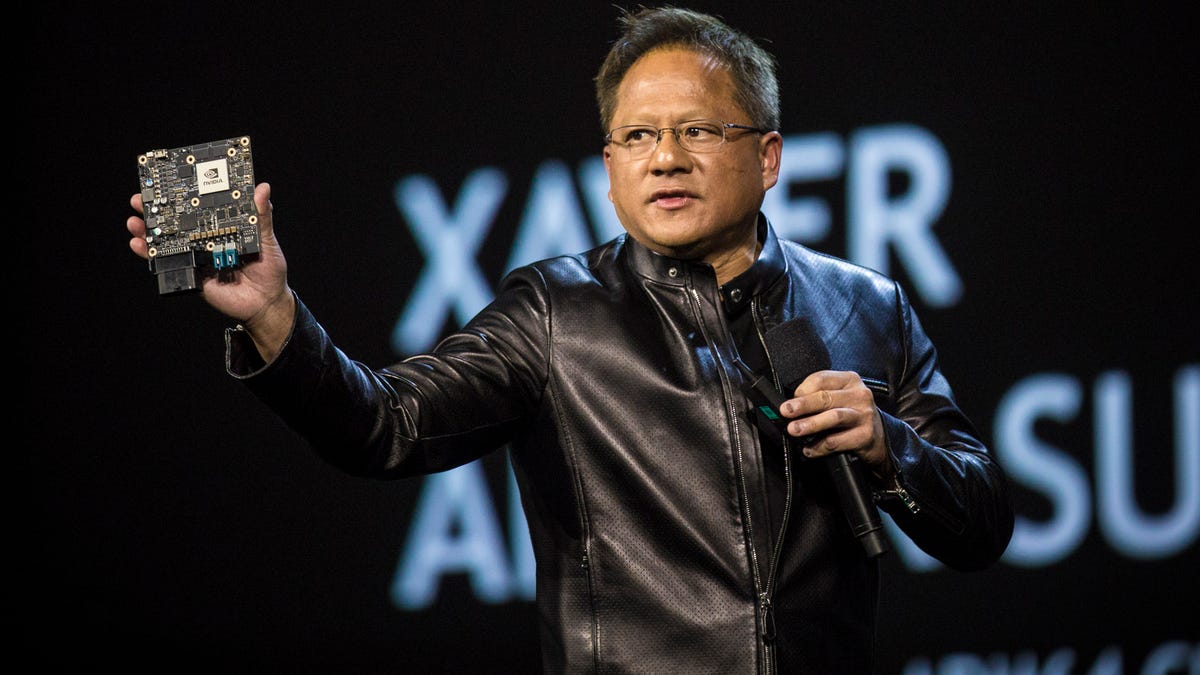

Nvidia CEO Jen-Hsun Huang holds up Xavier, a powerful computer designed to be the brains of self-driving cars.

Nvidia founder and CEO Jen-Hsun Huang used his keynote address at CES 2017 to show off a new computer named Xavier, designed to enable self-driving cars, and to lay out how the company's self-driving car platform works.

Huang backed up his product announcements with a series of new partnerships intended to cement Nvidia's place in the automotive industry.

"We would like to turn every car into an [artificial intelligence]," Huang said. To support that goal, he brought Scott Keogh, president of Audi of America, onstage to announce a partnership that promises highly automated Audi vehicles by 2020. The term "highly automated" usually refers to cars that can drive themselves under certain conditions, such as on specific freeways.

Automakers, automotive equipment suppliers and technology companies have all been working on self-driving car research. The goals of self-driving technology include reducing or eliminating car accidents, which caused more than 30,000 deaths on US roads last year; reducing traffic, which wastes fuel and people's time; and enabling automated car-sharing services, which could make it possible for many people to live without the expense of owning a car.

During his keynote, Huang said Nvidia has been developing self-driving technology for 10 years. Xavier is the company's next-generation driving computer, following its earlier Drive PX systems. It would serve as the brains of a self-driving car, processing data from sensors, determining the car's location from maps and making driving decisions.

Xavier, a system on a chip, combines an eight-core ARM64 processor with a graphics processing unit (GPU) based on Nvidia's new Volta architecture to deliver 30 TOPS (trillion operations per second). Nvidia points to its GPU expertise as the basis for the environmental perception that self-driving cars require. Huang noted that machine learning, which is essential for cars to recognize obstacles, has advanced rapidly thanks to GPUs.

An AI that can read lips

As an intermediate step toward self-driving cars, Huang described a new Nvidia platform called AI Co-Pilot. It consists of sensors that detect a car's surroundings and cameras in the cabin that monitor the driver. The platform gives a car many of the sensors and much of the intelligence it would need to drive itself, but it still relies on a human driver.

AI Co-Pilot would alert the driver to potential threats around the car, such as a fast-moving motorcycle. By tracking the driver's gaze, it can also warn about another car approaching from a direction the driver is not looking.

One of AI Co-Pilot's more remarkable capabilities is lip reading, which Huang claimed works with 95 percent accuracy. Computer lip reading could make voice commands far more accurate.

Industry friends

To help bring this self-driving technology to production, Huang announced that Nvidia would work with two digital mapmakers, Here and Zenrin. Here is a mapping company owned by a consortium of German automakers: BMW, Audi and Daimler, the parent company of Mercedes-Benz. Zenrin builds digital maps of Japanese cities. Nvidia had previously signed mapping deals with China's Baidu and with TomTom.

ZF and Bosch, both large automotive equipment suppliers, will begin using Nvidia's self-driving technology. ZF previously announced ZF ProAI, a self-driving platform built on Nvidia technology, which it will offer to car and truck makers.

The Audi deal builds on previous partnerships between Nvidia and the German automaker. Audi has used Nvidia graphics chips to power its infotainment systems and instrument cluster displays.

Nvidia faces stiff competition in marketing its self-driving car technologies, but the automotive industry is likely to adopt self-driving rapidly over the next 10 years, creating a large opportunity.