Drive.ai gives us the autonomous car equivalent of beer goggles

It's fascinating to look through the sensors of a self-driving car and see how much data it actually generates.

Drive.ai is an autonomous car developer that currently has a fleet of test vehicles in Texas. It recently published an article on its Medium page that details how it processes data, how it visualizes that data and how it's using deep-learning AI to speed up the conversion from raw data into usable information.

One of the things we found fascinating was the time scale Drive.ai works against. Currently, every hour of on-road autonomous driving takes 800 human hours of processing before Drive.ai's systems can understand the data. This process is called annotation, and it's critical to understanding what happens on a drive. An 800-to-1 ratio is an almost insane difference, but the company hopes to use artificial intelligence to dramatically reduce that figure and improve accuracy to 100 percent.

This visualization is meant for passengers of self-driving cars, and it's kind of soothing in a way.

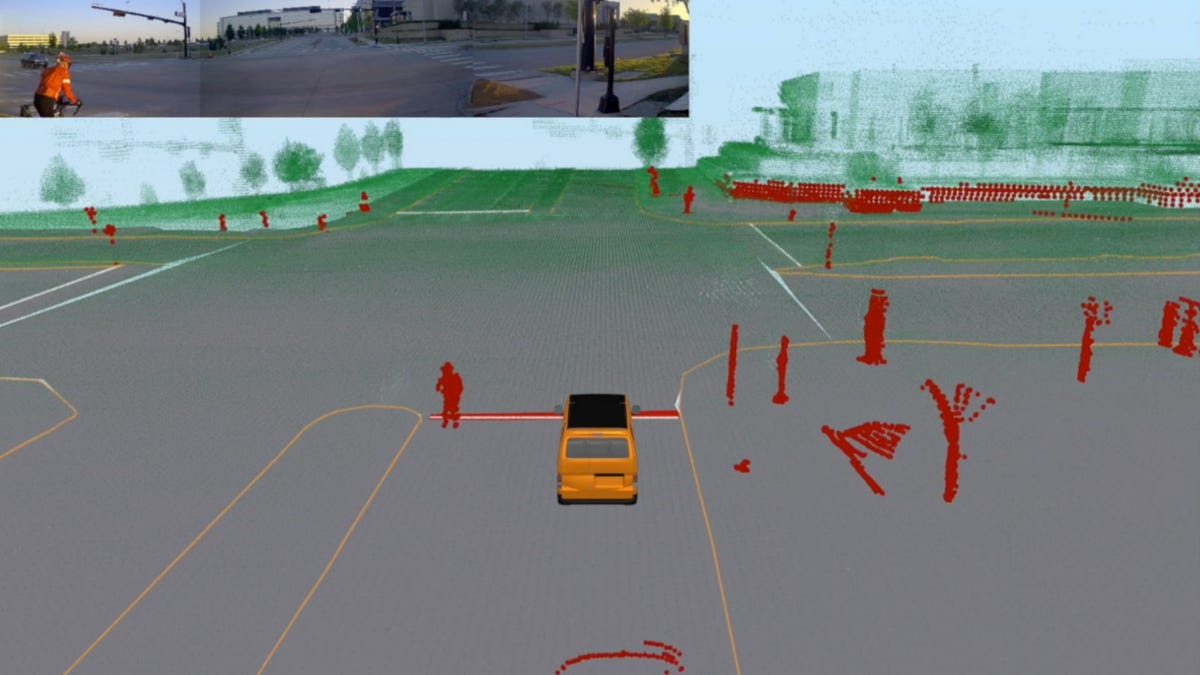

Another area where Drive.ai is doing exciting work is visualizing all the data the vehicle generates. The first kind of visualization is for the car's occupants. Seeing what the car sees in a recognizable, almost video-game-like style would put many nervous riders at ease.

Drive.ai also visualizes data in a very different way for analysis after a drive. Data such as the point cloud, a set of points in space that the vehicle generates and that the Drive.ai team's computers turn into a high-definition image, is checked for accuracy.

It's easy for us laypeople to say we understand the scope of the problems facing self-driving developers, but it's not until we get a peek behind the curtain, like the one Drive.ai has given us, that we truly understand it. The challenge is not unlike putting a person on the moon, except that instead of flinging someone into space, these developers are just trying to get your car to drive you safely to the mall.