Robots learn new tricks, take new shapes (photos)

Boston-area researchers show off the many shapes and purposes of robotics, including tiny robotic bees to pollinate fields, ostrich-like artificial limbs, and cookie-baking humanoid robots.

Shake and bake robot

Mechanical engineering student Mario Bollini shows how the Willow Garage PR2 robot can be programmed to mix ingredients and bake cookies at MIT's Computer Science and Artificial Intelligence Lab (CSAIL). The robot uses a Microsoft Kinect sensor to find the bowls in front of it, grab them, and then mix the ingredients in a process that takes about two and a half hours. By finding out where the robot makes mistakes, Bollini and fellow researchers hope to improve the motion plans they set out for it and learn how robots could be used for more complex tasks, such as manufacturing. This slideshow shows a sampling of robotics projects at CSAIL and other Massachusetts universities. Also see the related story, "With Microsoft Kinect, MIT robots see in 3D."

Quadrotor

The Robust Robotics group at MIT is designing a quadrotor that can enter environments, such as buildings after a natural disaster, and create a three-dimensional image of them in software. The robot uses a Kinect sensor (seen on bottom right) to send out an infrared signal. Based on the reflection, an onboard computer can start to build an image, point by point, of its surroundings. The sensors also allow it to move through a space without colliding with other objects in places where GPS is not available for guidance.

Robotic wheelchair

Given the aging population in many countries, health care for older people could become one of the most useful applications of robotics in the years to come. This autonomous wheelchair from MIT is designed to let people speak into a microphone to tell it where to take them. The system, which uses the Kinect depth camera, could let a person teach the wheelchair how a building is laid out. Once a map of the building is built, the person in the wheelchair could then give instructions, such as "take me to my room." Students are testing the device at a long-term residential care facility for people with neurological diseases, according to Seth Teller at MIT.

Updated on December 7 with correction regarding the type of facility where the wheelchair is being tested.

Ostrich leg

Ian Manchester, who works in the Robot Locomotion Group at MIT, shows a prototype of Fast Runner, a robotic leg inspired by an ostrich. Moving the top hip joint with a motor (or by hand) induces a kicking motion through the entire leg.

Building a point cloud

MIT researcher Maurice Fallon shows how an autonomous vehicle builds a picture of its environment point by point. Giving robots the ability to understand their environment is important to giving them more autonomy and can help people get a picture of dangerous situations, such as the inside of a nuclear power plant after an accident. Researchers at MIT and elsewhere are writing the algorithms and software to convert the data that sensors collect into useful images.

Box movers

This robot from the Robot Locomotion Group at MIT is designed to use information from its environment to move boxes. By using machine learning, the robot could, for example, push one box out of the way in order to pick up a desired box.

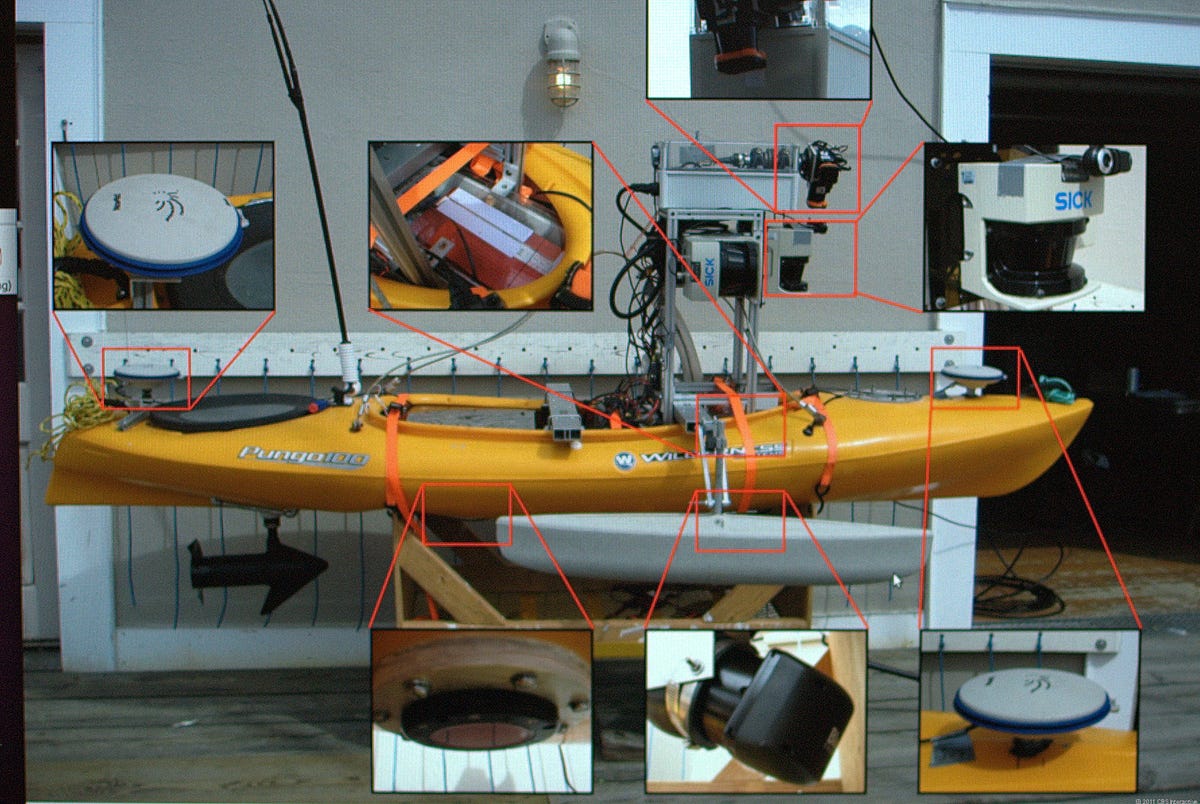

Autonomous kayak

This shows the sensor and communications equipment used to create an unmanned, or autonomous, kayak, which uses GPS and Wi-Fi to communicate with other kayaks. The goal of the research is to write "collision avoidance software" that lets each kayak detect others in the area and perform its task without collisions. The researchers hope that the software can ultimately be deployed in situations where different water vehicles that have not communicated with each other before can automatically detect one another.

Robot bees

One trend in robotics is to design robots that mimic biology, such as this one made at Harvard University's Wyss Institute for Biologically Inspired Engineering. The idea behind these fly-sized robots is that they could be used to pollinate crops. A mass die-off of bees from colony collapse disorder is causing concern about food production.

Kilobots

Harvard University has developed a way to manage hundreds or thousands of tiny robots en masse, rather than only a few at a time. Through a licensing arrangement, these Kilobots (for thousands of robots) are now available for robot enthusiasts or researchers.

Bat copter

Boston University researcher Kenn Sebesta demonstrates a four-propeller helicopter that communicates with a main computer and its controller via Wi-Fi. The Intelligent Mechatronics Laboratory at Boston University is focused on building algorithms inspired by biology, such as the way bats navigate, to improve the ability of unmanned vehicles to navigate in military or disaster recovery applications.

Multi-touch controller

Researchers at the University of Massachusetts in Lowell are creating a system that will allow operators of unmanned vehicles to control devices through a multi-touch tablet, rather than a joystick. With this alternate interface, the lab hopes to make robots usable in disaster response scenarios where multiple robots are remotely controlled from a tablet or Microsoft Surface computer.

Bot doll

Among the many pieces of hardware at MIT's Robot Locomotion Group is this inspiring robot.