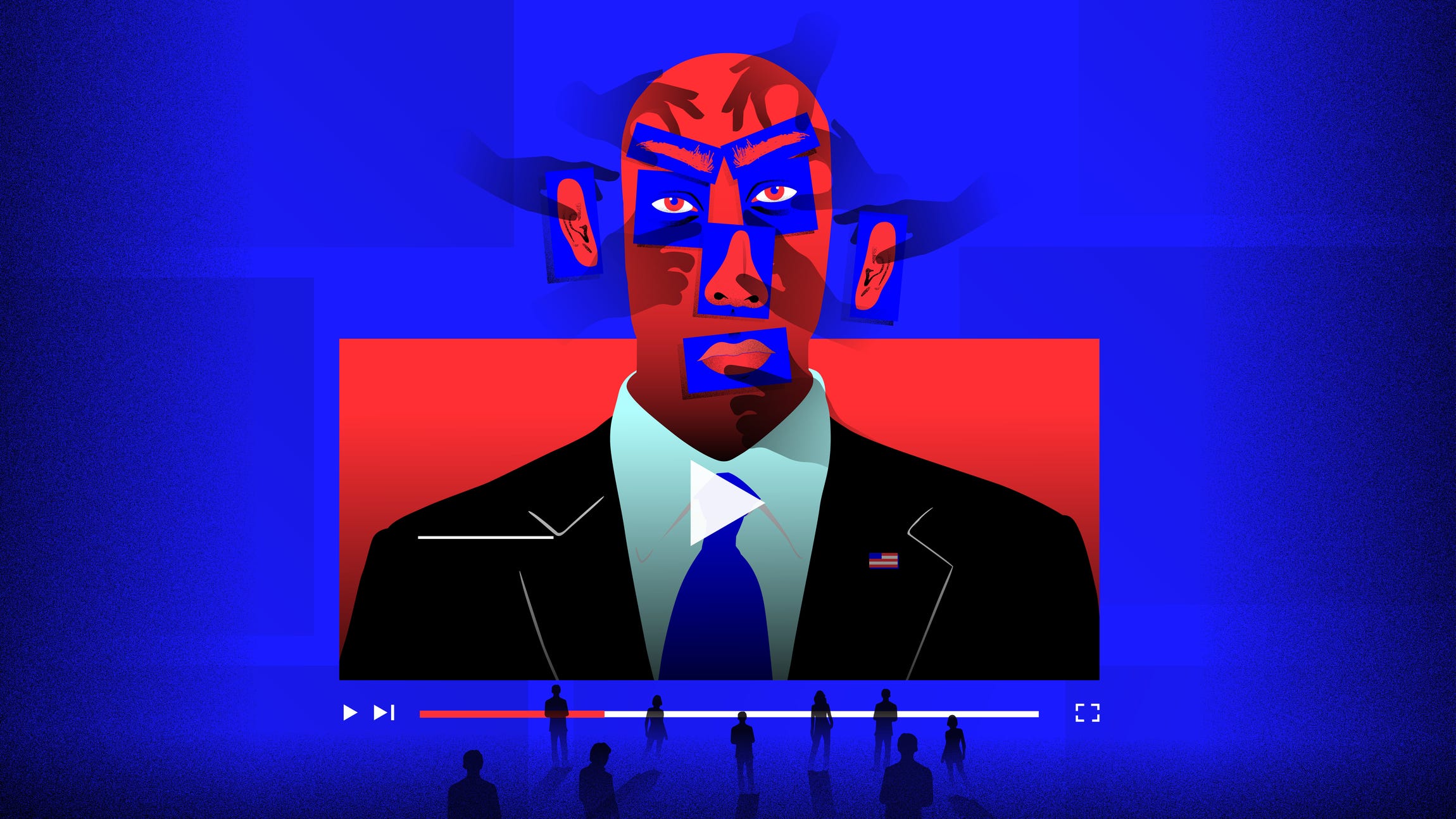

Deepfakes' threat to the 2020 US election isn't what you'd think

But if a devastating deepfake does emerge, it'll hit at the last moment.

Deepfakes are scary. But the good news for the US election is that experts agree a candidate deepfake is unlikely to screw up the 2020 vote.

The bad news: The mere existence of deepfakes is enough to disrupt the election, even if a deepfake of a specific candidate never surfaces.

One of the first nightmarish scenarios people imagine when they learn about this new form of artificial intelligence is a disturbingly realistic video of a candidate, for example, confessing to a hot-button crime that never happened. But that's not what experts fear most.

"If you were to ask me what the key risk in the 2020 election is, I would say it's not deepfakes," said Kathryn Harrison, founder and CEO of the DeepTrust Alliance, a coalition fighting deepfakes and other kinds of digital disinformation. "It's actually going to be a true video that will pop up in late October that we won't be able to prove [whether] it's true or false."

This is the bigger, more devious threat. It is what's known as the Liar's Dividend. The term, popular in deepfake-research circles, means the mere existence of deepfakes gives more credibility to denials. Essentially, deepfakes make it easier for candidates caught on tape to convince voters of their innocence -- even if they're guilty -- because people have learned they can't believe their eyes anymore.

And if somebody cunning really wants a deepfake to mess with our democracy, the attack likely won't be on one of the candidates. It would be an assault on your faith in the election itself: a deepfake of a trusted figure warning that polling sites in, say, black neighborhoods will be unsafe on election day, or that voting machines are switching votes from one candidate to another.

Manipulated media isn't new. People have been doctoring still images since photography was invented, and programs like Photoshop have made it a breeze. But deepfake algorithms are like Photoshop on steroids. Deepfakes are sophisticated video forgeries created by artificial intelligence, and they can make people appear to be doing or saying things they never did.

Chances are you've seen a harmless deepfake by now. Millions have watched actor Jordan Peele turn Barack Obama into a puppet. Millions more have seen goofy celebrity face swaps, like actor Nicolas Cage overtaking iconic movie moments. The Democratic Party even deepfaked its own chairman to hammer home the problem of deepfakes as the election approached.

That Democratic PSA falls right into the first line of defense against deepfakes, which is to educate people about them, said Bobby Chesney, a law professor at the University of Texas who coined the term Liar's Dividend in an academic paper last year. But the dark side of educating people is that the Liar's Dividend only grows more potent. Every new person who learns about deepfakes can potentially be another person persuaded that a legit video isn't real.

And that's the intersection where the US electorate finds itself.

The cry of fake news becomes the cry of deepfake news, Chesney said. "We will see people trying to get...more credibility for their denials by making reference to the fact, 'Haven't you heard? You can't trust your eyes anymore.'"

The reason deepfakes are such a new phenomenon, and the reason they're so effective at fooling the human eye, stems from the kind of artificial intelligence that creates them. This technology is known as GANs, short for generative adversarial networks. While artificial intelligence has been around for decades, GANs were developed only about six years ago.

Researchers created deepfakes that graft candidates' faces onto impersonators' heads, in order to test a system to debunk them.

To understand GANs, imagine an artist and an art critic locked in rooms next to each other. The artist creates a painting from scratch and slips it into the critic's room shuffled inside a stack of masterpieces. Out of that lineup, the critic has to pick which one was painted by his neighbor, and the artist finds out whether his painting fooled the critic. Now picture them repeating this exchange over and over again at hyperspeed, with the aim of ultimately producing a painting that even a curator in the Louvre would hang on the wall. That's the basic concept of GANs.

In this kind of deep machine learning, the artist is called a generator, the critic is called a discriminator, and both are neural networks -- AI models inspired by how the brain works. The generator creates samples from scratch, and the discriminator looks at the generator's samples mixed in with selections of the real thing. The discriminator judges which samples are real or fake and then sends that feedback back to the generator. The generator uses that guidance to improve its next samples, over and over again.
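The back-and-forth between generator and discriminator can be sketched in a few lines of Python. This is only a toy illustration under loud assumptions, not how any real deepfake tool works: the "real thing" is a stream of numbers centered on 4.0 rather than images, both networks are shrunk to a single linear unit, and the function name `train_toy_gan`, the learning rate and the batch size are made up for the example. Real GANs use deep neural networks, and even this toy is not guaranteed to settle down; the two sides can keep chasing each other.

```python
import numpy as np


def sigmoid(s):
    # Clip so np.exp never overflows on extreme scores.
    return 1.0 / (1.0 + np.exp(-np.clip(s, -30.0, 30.0)))


def train_toy_gan(steps=2000, batch=64, lr=0.02, seed=0):
    """Alternate critic and artist updates on 1-D data; return the learned parameters."""
    rng = np.random.default_rng(seed)
    a, b = 1.0, 0.0   # generator ("artist"): fake = a * noise + b
    w, c = 0.0, 0.0   # discriminator ("critic"): P(real) = sigmoid(w * x + c)

    for _ in range(steps):
        real = rng.normal(4.0, 0.5, batch)   # assumed stand-in for "the real thing"
        z = rng.normal(0.0, 1.0, batch)      # random noise the generator starts from
        fake = a * z + b

        # Critic step: raise its score on real samples, lower it on fakes.
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        grad_w = np.mean((d_real - 1.0) * real) + np.mean(d_fake * fake)
        grad_c = np.mean(d_real - 1.0) + np.mean(d_fake)
        w -= lr * grad_w
        c -= lr * grad_c

        # Artist step: use the critic's feedback to make fakes score as "real".
        d_fake = sigmoid(w * fake + c)
        grad_a = np.mean((d_fake - 1.0) * w * z)
        grad_b = np.mean((d_fake - 1.0) * w)
        a -= lr * grad_a
        b -= lr * grad_b

    return {"a": float(a), "b": float(b), "w": float(w), "c": float(c)}


if __name__ == "__main__":
    print(train_toy_gan())
```

In this sketch, `b` is the center of the generator's fakes. It starts at 0, and the discriminator's feedback initially pushes it toward the real data's center, which is the same improve-by-being-judged loop described above, just at a vastly smaller scale.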

So no matter what type of media it is, GANs are systems designed to get better and better at fooling you. GANs can create photos, voices, videos -- any kind of media. The term deepfake is used most often with videos, but deepfakes can refer to any so-called "synthetic" media produced by deep learning.

That's what makes deepfakes hard for you to identify with the naked eye.

"If it's a true deepfake, then the uncanny valley won't save you," Chesney said, referring to the instinctive feeling of distrust when faced with a CG or robotic humanoid that doesn't look quite right. "If centuries and centuries of sensory wiring in your brain are telling you that's a real person doing this or saying that, it's deep credibility."

The birth of deepfakes has given rise to new terms: Cheapfakes. Shallow fakes. These are new ways to describe old methods of manipulating media. A popular example is the video of US House Speaker Nancy Pelosi that was slowed down to make her appear drunk. It's a simple, easy, cheap manipulation that's also effective, which makes it a bigger misinformation threat.

"Focusing on deepfakes is like looking through a straw," Ben Wizner, an attorney at the American Civil Liberties Union who is whistleblower Edward Snowden's lawyer, said in comments at a legal conference on deepfakes earlier this year. The larger problem, he said, is that huge majorities of people get critical information through platforms like Google, Facebook and YouTube. Those companies get rewarded with billions of advertising dollars for keeping your attention. But helping you become a more-informed citizen never grabs your attention as strongly as something inflammatory does.

The result is a system in which incendiary fakes thrive while sober truth suffers.

Deepfakes can exploit that system just like cheapfakes already do. But deepfakes are costlier and, because they're harder to make, far fewer people are capable of creating the highly convincing deepfakes that are the most difficult to debunk.

"So much of the focus on deepfakes is on electoral context," Sam Gregory, a program director with human-rights video organization Witness, said. A fixation on "the perfect deepfake" of a political candidate or world leader is the kind of disinformation that tends to stoke congressional hearings. But that overlooks meaningful harm already happening to regular people, at an increasing scale, where even a poor-quality deepfake is still deeply damaging.

Last month, for example, a researcher exposed a free, easy-to-use deepfake bot operating on the Telegram messenger app that has victimized seemingly millions of women by replacing the clothed parts of their bodies in photos with nudity. More than 100,000 women's photos -- manipulated to make the victims appear to be naked, without their consent -- had been posted publicly online, the researcher verified. An unverified counter ticking off the number of women's photos this bot has manipulated hit 3.8 million as of Election Day in the US.

Once a woman's image is simply and easily manipulated into nonconsensual sexual imagery, no matter the quality of that image, "the harm is done," Gregory said.

Those nonconsensual sexual photos are depressingly easy for anyone to make -- simply message the bot with the photo to manipulate. And with enough technological savvy and a powerful computer, people can use open-source deepfake tools to make those celebrity face swaps and lip syncs with Nicolas Cage.

But the kind of deepfakes that can do the most political damage need large data sets, very specific algorithms and significant computing power, Harrison said.

"There are certainly no lack of people who could make videos like that, but most of them are in academia and most of them are not trying to directly sabotage electoral democracy in the United States," she said.

But, ironically, academia is unintentionally feeding the Liar's Dividend. Most of our understanding of deepfakes comes from universities and research institutions. But the more these experts seek to protect people by educating them, the more they also widen the pool of people vulnerable to a liar's denial of a legit video, said Chesney, the coauthor of the Liar's Dividend paper with Boston University law professor Danielle Keats Citron.

"Everybody has heard about these now," he said. "We've helped plant that seed."

There are two possible remedies to the Liar's Dividend.

One: Deepfake-detection tools could catch up with the progress in deepfake creation, so that debunking fake videos becomes quick and authoritative. But, spoiler: That may never happen. The other: The public at large could learn to be skeptical whenever a video appeals to whatever riles them up most. And that may never happen either.

Experts may not be distressed about a candidate deepfake disrupting the 2020 US vote, but other kinds of deepfakes could -- ones you might not expect.

"I don't think anyone's going to see a piece of video content, real or fake, and suddenly change their vote on Election Day," said Clint Watts, distinguished research fellow at the Foreign Policy Research Institute who testified to Congress last year about deepfakes and national security. "Trying to convince people Joe Biden touches people too much or whatever….I don't see how people's opinions can be really shaped in this media environment with that."

What worries him more are deepfakes that undermine election integrity -- like an authoritative figure reporting misinformation about turnout, polling site disruptions or voting machines changing your ballot.

Another worry: Deepfakes could destabilize the vote on US soil by causing havoc at a US outpost abroad. Imagine a fake that triggers an attack like the one on the US diplomatic mission in Benghazi, Libya, in 2012, which became a political flashpoint in the US. State actors like China or Russia, for example, could find an effective strategy in forged videos that endanger US soldiers or US diplomats, particularly in war-torn regions or countries ruled by a dictator, where populations are already struggling to separate truth from propaganda and rumor.

"If I were the Russians, I would totally do that," Watts said.

Russia, however, is less threatening on the deepfake front. Russia excels more at the art of disinformation -- like spreading fake news -- than the science of deepfakery, Watts said. But it's within reach for other state actors. China has completely deepfaked television anchors already, and countries in the Middle East have the funds to outsource disinformation campaigns to high-tech private companies.

No matter what form an election deepfake tries to take, the time to be on highest alert is right before you cast your vote.

"If it happens 48 hours out of the Election Day," Watts said, "we may not have a chance to fix it."

Originally published May 4, 2020.