MIT researchers use Reddit to create the first 'psychopath AI'

Yes, the company that makes the creepy dog robots that can open doors spun out of this same institute, but everything is totally fine, we promise.

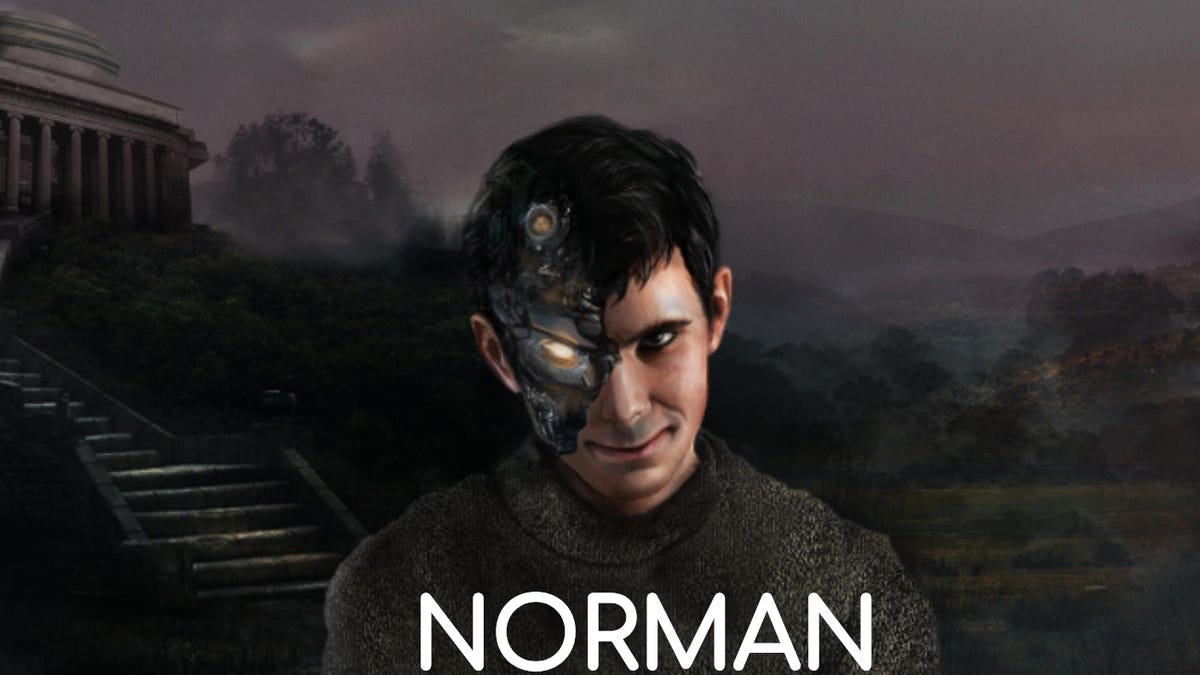

Meet Norman.

He's not your everyday AI. His algorithms won't help filter through your Facebook feed or recommend you new songs to listen to on Spotify.

Nope -- Norman is a "psychopath AI", created by researchers at the MIT Media Lab as a "case study on the dangers of artificial intelligence gone wrong when biased data is used in machine learning algorithms."

The researchers set Norman up to perform image captioning, a deep-learning method that generates a textual description of an image, then trained him on image captions from an unnamed subreddit known for its graphic imagery surrounding death.

Then they had Norman explain a range of Rorschach inkblots, comparing the answers from their psychopathic AI with those of your friendly neighbourhood "standard AI". Although Norman was originally unveiled on April 1, those answers are no joke -- they're highly disturbing.

Where a standard AI sees "a group of birds sitting on top of a tree branch" (awww!), Norman, our HAL-9000-esque death-machine, sees "a man electrocuted to death" (ahhh!). Where the standard AI sees "a close up of a wedding cake on a table", Norman, our malicious AI robokiller, sees "a man killed by speeding driver".

The researchers didn't "create" Norman's "psychopathic" tendencies; they just helped the AI on its way by only allowing it to see a particular subset of image captions. The way Norman describes the Rorschach inkblots with simple statements does make it seem like it is posting on a subreddit.

But why even create a psychopath AI?

The research team aimed to highlight the dangers of feeding specific data into an algorithm and how that may bias or influence its behaviour.
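To get a feel for that point, here is a toy sketch in Python. It is not the MIT team's method: a simple nearest-neighbour lookup stands in for a deep-learning captioning network, and the two-number "feature vectors" and the `train` and `caption` helpers are made up purely for illustration. The only real details are the captions, which are the ones quoted above. The idea it shows is that the same algorithm, fed different training captions, describes the same ambiguous input very differently.

```python
import math

def train(examples):
    # "Training" in this toy just memorises (feature_vector, caption) pairs.
    return list(examples)

def caption(model, features):
    # Return the caption of the closest memorised example (1-nearest neighbour).
    nearest = min(model, key=lambda pair: math.dist(pair[0], features))
    return nearest[1]

# Same made-up image features in both datasets; only the captions differ.
standard_data = [
    ((0.2, 0.8), "a group of birds sitting on top of a tree branch"),
    ((0.7, 0.3), "a close up of a wedding cake on a table"),
]
biased_data = [
    ((0.2, 0.8), "a man electrocuted to death"),
    ((0.7, 0.3), "a man killed by speeding driver"),
]

standard_model = train(standard_data)
norman_like_model = train(biased_data)

# Hypothetical features for an ambiguous inkblot.
inkblot = (0.25, 0.75)

print(caption(standard_model, inkblot))
print(caption(norman_like_model, inkblot))
```

Nothing in the code is "psychopathic"; the two models differ only in the captions they were shown.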

That starts to make me wonder -- doesn't the team at MIT spin-off Boston Dynamics constantly push and poke and annoy its running, jumping and door-opening robot creations?

Are we doomed to be overrun by four-legged robo-hell-beasts? Let's hope not.
