Fighting PTSD with virtual reality (pictures)

Researchers at USC's Institute for Creative Technologies are working on systems designed to use virtual humans in a variety of therapies. CNET Road Trip 2012 stopped by to check out the developments.

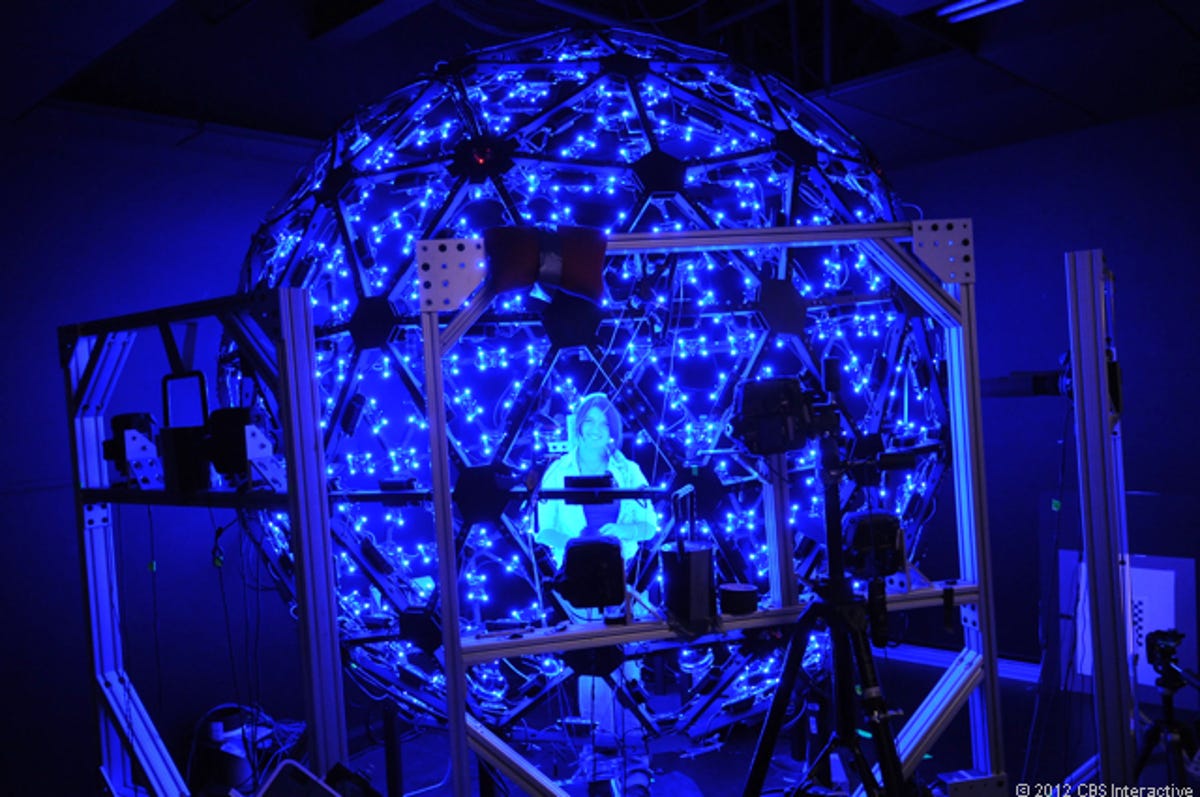

Blue dome

LOS ANGELES--As has been well established since the start of the Iraq and Afghanistan wars, service members returning home after being in combat are struggling with post-traumatic stress disorder (PTSD) at levels never seen in the American military before.

This manifests in many different ways, from violence to poor behavior to alcoholism to domestic abuse and even suicide. The military has been trying to figure out how to address the issue, but to date hasn't made enough progress.

At the University of Southern California's Institute for Creative Technologies, however, researchers have been developing a variety of systems designed to attack service members' PTSD. In large part, the institute has been working with various forms of virtual reality and virtual humans to help these combat veterans deal with what's happening to them and those around them.

As part of Road Trip 2012, CNET reporter Daniel Terdiman stopped in at the Institute for Creative Technologies to see some of the projects being worked on there.

This dome, known as Light Stage X, employs dozens of special cameras and hundreds of LEDs to scan people's faces in order to create extremely realistic virtual or animated human faces. The result is good enough for Hollywood -- and films like "Avatar" have adopted the technology. But it is also being put to use to help develop technology that the military can use in therapy for its troubled service members.

Virtual Iraq/Afghanistan

This system, known as Virtual Iraq/Afghanistan, is designed to help service members suffering from PTSD to address the underlying issues causing their troubles.

Operated by a trained therapist who first gets to know the veteran, the technology is designed to let the service member relive events that are likely to be at the root of their PTSD. Working slowly and methodically, the therapist and the veteran discuss what might have been the cause, and then try to work through it.

The software is designed so that a wide variety of scenarios can be played out, from roadside bombs going off, to someone firing into a Humvee, to being on patrol at night using night vision, and much, much more.

Night vision

Virtual Iraq/Afghanistan is not meant to be the only therapy a veteran will use. But because it is often very difficult for service members to talk about the events at the root of their PTSD, the software, when directed carefully and methodically by a trained therapist, can gradually help the veteran relive the events, and in many cases, start to heal.

Gunslinger

This training system, known as Gunslinger, is a virtual reality project aimed not at attacking PTSD, but rather at helping service members prepare to deal with stressful combat situations where they will have to act very quickly -- and use their wits and negotiating skills -- to survive.

Though this iteration at USC's Institute for Creative Technologies (ICT) is set up as a Wild West saloon scenario, it can also be run to look like a small venue in Afghanistan.

The idea is that a service member walks into the dimly lit room and proceeds to carry on a conversation with a bartender -- a virtual agent programmed to respond to spoken cues. At some point, a "bad guy" arrives intent on taking over the situation, and the service member must figure out how to get the drop on the attacker. Otherwise, it will go badly.

Here, we see a researcher facing off with the bad guy, known as Rio Laine.

Dead

As seen on the screen in the "saloon," the researcher was not able to get the drop on Rio Laine, and was shot. Service members training with Gunslinger may have just half a second to shoot first, which is considered enough time to survive, although success rates have been getting lower recently, researchers say.

Paul with mirrorball

Paul Debevec, who heads up ICT's Graphics Lab, holds a mirrorball inside the Light Stage X dome. Light Stage is designed to create scans of people's faces far beyond what any other system can do, Debevec explained. It captures the skin's reflective properties, which make the result look extremely realistic, and records 16,000 pixels of detail from ear to ear. Though highly prized in Hollywood, the system can also be used to generate faces for virtual humans for military therapy projects.

Big Light Stage

This is a full-sized Light Stage dome used to create full-body scans rather than just facial scans. It utilizes (by coincidence) 6,666 LEDs in conjunction with dozens of cameras to create the scans.

Virtual human

One project that ICT has deployed to various military installations is called ELITE, or Emergent Leader Immersion Training Environment. The idea is to put young squad leaders through the training so that they can learn how to handle subordinate service members who are in some kind of trouble that might be related to PTSD.

Because talking about personal issues -- especially psychological ones -- is thought to be a sign of weakness by many in the military, lots of veterans are extremely hesitant to deal with the issues brought on by their experiences in combat. ELITE, and other versions of it, are meant to help train young officers to compassionately help struggling subordinates through hard times that may have seen them get in serious disciplinary trouble.

Many screens

With ELITE, one young leader at a time is put through the direct training. But whole classrooms of fellow young officers can watch and participate at the same time.

Working with a patient

ICT researcher Belinda Lange, a former physical therapist, developed a system that utilizes popular gesture control technologies like Microsoft's Kinect to help injured or struggling veterans -- and non-military patients, as well -- start to undergo rehabilitation therapy.

The idea is to create simple on-screen games that task the patients with moving their limbs to, say, "grab" a jewel out of the air. As patients repeat the process again and again, the system keeps track of their results and helps their therapists understand their progress.

Belinda plays

ICT researcher Belinda Lange demonstrates her Kinect-based rehabilitation therapy game.

Patient in wheelchair

In this screen shot from an ICT video, a patient in a wheelchair successfully achieves some of his rehabilitation goals using Lange's software.

Wearing head-mounted display

Mark Bolas, the director of ICT's Mixed Reality Lab, wears a head-mounted display that is part of a project known as Redirected Walking that gives him -- or anyone wearing it -- a 140-degree view of a virtual reality combat scenario. The idea is to allow the wearer to walk around inside this virtual world, getting real-world cues, such as walking on gravel -- Bolas' lab has a long gravel path set up for people to walk along -- that can help them feel like what they're experiencing is more realistic.

Walking on gravel

In a demo of the Redirected Walking technology, the wearer of the head-mounted display sees a virtual representation of a Humvee and a building, and must walk around two walls and down a gravel path. The clever part of the demo is that the wearer walks into a side room and looks for something, and when they do so, they don't realize they are returning to the physical point in the lab where they started. So when they emerge from the "room" they are back on the gravel path.

However, while they think they have simply stepped back outside the room they just entered, they are actually back where the real gravel starts. This means that as long as the researchers keep sending the wearer into side rooms to check on things, the wearer can keep going down the path for hundreds of feet, always on gravel, even though the actual gravel covers only 30 feet in the lab.

Map

This screen shows the wearer's view of the virtual desert scene, as well as a map that demonstrates the idea explained in the previous slide -- that researchers can create what appears to be a very long gravel path in the virtual scenario, despite there only being 30 feet of gravel in their physical lab.

Smile level

In Louis-Philippe Morency's ICT lab, he and other researchers are developing a system built around virtual human therapists who can interact with PTSD patients. The virtual therapist asks the patient questions, and then, by reading the patient's facial expressions and body language, it can understand how the person is actually feeling.

Talking to virtual therapist

ICT researcher Alesia Egan demonstrates how a "patient" would interact with the virtual therapist.

See the soldier

In the Mixed Reality Lab, this head-mounted system is part of a technology designed to create virtual humans that can walk directly up to a service member and try to intimidate them. The problem is that in order to be effective, the virtual human must look directly into the eyes of the service member. This is a very tricky problem, but Bolas' lab has come up with this system, which has a projector on the helmet that sends out light that is then reflected back. In order to work, the system must know where the wearer is standing so that the virtual human's eyes are pointed directly at the wearer's.

Virtual Emily expressions

As part of a project called Virtual Emily, Paul Debevec's graphics lab used the Light Stage to create these 32 different facial expressions. Animators can then use those expressions as the base for just about any animation they want to put a virtual human in.

Climbing wall

In Jacquelyn Morie's lab at ICT, she and other researchers have been working on several simulations aimed at injured or troubled combat veterans that are built into the virtual world Second Life.

Second Life has long been a favorite place for people who, for example, can't walk in real life to get to walk again. Here, a researcher demonstrates how a combat veteran using the lab's Second Life simulation can go up a climbing wall by moving their real hands (while wearing special motion-sensitive gloves).

ELITE dashboard

This is the dashboard for ELITE, which shows the real-time interaction between a young officer in training and the virtual subordinate. It also shows how a classroom of other officers answered the question that the primary student was addressing.

Sim Coach

Another system aimed at helping combat veterans suffering through PTSD is called Sim Coach. This Web-based service, however, is built for the veteran's family members or friends, and puts those people in front of a virtual coach who leads them through a series of questions and scenarios, and helps them to learn how to handle their troubled loved one.